# Your First Weather Radar in 60 Seconds

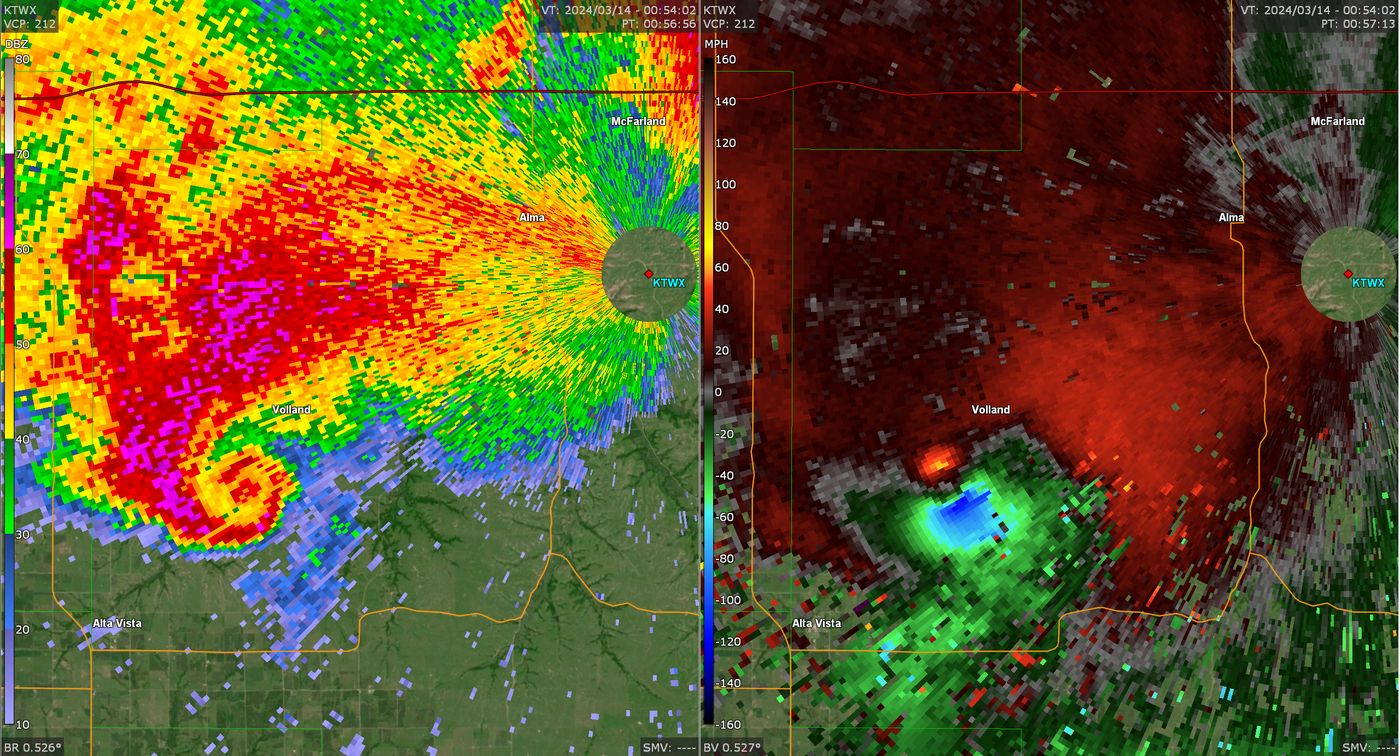

Source: National Weather Service, Federal Aviation Administration & United States Air Force — NEXRAD KTWX. Public Domain.

## The 5-Second Challenge

Watch how fast we can connect to more than 90 GB of radar data. No downloads, no file iteration.

> **Tip:** The timer below shows how long the metadata takes to load. The data itself streams on demand, only when you need it.

```python
# Core libraries
import cmweather  # noqa: F401 - registers radar-specific colormaps
import icechunk as ic  # Cloud-native versioned storage
import matplotlib.pyplot as plt
import numpy as np
import xarray as xr

# For the geographic context map
import cartopy.crs as ccrs
import cartopy.feature as cfeature

# Connect to NEXRAD KLOT data on the Open Storage Network.
# This bucket is publicly readable, so no credentials are needed.
storage = ic.s3_storage(
    bucket="nexrad-arco",
    prefix="KLOT-RT",
    endpoint_url="https://umn1.osn.mghpcc.org",
    anonymous=True,
    force_path_style=True,
    region="us-east-1",
)

# Open the repository and create a read-only session on the main branch
repo = ic.Repository.open(storage)
session = repo.readonly_session("main")

print("✓ Connected to repository: nexrad-arco/KLOT-RT")
print("✓ Session opened on branch: main")
```

```text
✓ Connected to repository: nexrad-arco/KLOT-RT
✓ Session opened on branch: main
```

```python
%%time
# Open the entire radar archive lazily (only metadata is read here)
dtree = xr.open_datatree(
    session.store,
    zarr_format=3,
    consolidated=False,
    chunks={},
    engine="zarr",
    max_concurrency=5,
)
```

```text
CPU times: user 1.06 s, sys: 173 ms, total: 1.23 s
Wall time: 2.3 s
```

```python
# Total uncompressed (in-memory) size of the archive; dividing by 1024**3 gives GiB
size_gb = dtree.nbytes / 1024**3
print(f"Connected to {size_gb:.1f} GB of radar data")
print("Metadata loaded in a few seconds (see the timing above)")
print("Data streams on demand (no bulk download required)")
```

```text
Connected to 94.2 GB of radar data
Metadata loaded in a few seconds (see the timing above)
Data streams on demand (no bulk download required)
```

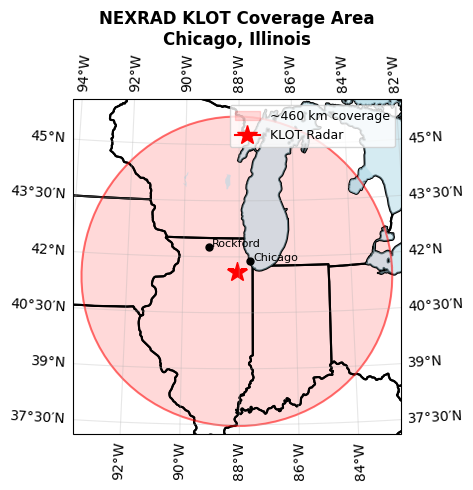

## Where Are We Looking?

KLOT is the NEXRAD station near Chicago, Illinois.

> **Note:** NEXRAD radars measure reflectivity out to a radius of about 460 km (~285 miles), rotating 360° at a series of elevation angles. Doppler velocity coverage is smaller (about 230 km) because of range-folding constraints.
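The shorter Doppler range follows from two textbook pulse-Doppler relations: the maximum unambiguous range is `r_max = c / (2 * PRF)` and the Nyquist (largest unaliased) velocity is `v_N = wavelength * PRF / 4`. Raising the pulse repetition frequency (PRF) to measure faster winds therefore shrinks the range. The sketch below evaluates both; the PRF values and the ~10.7 cm S-band wavelength are assumed, illustrative numbers, not KLOT's actual configuration, and the function names are hypothetical.

```python
# Pulse-Doppler trade-off behind the shorter velocity range (illustrative values).
C = 299_792_458.0  # speed of light in m/s


def max_unambiguous_range_m(prf_hz: float) -> float:
    """Farthest range whose echo returns before the next pulse: c / (2 * PRF)."""
    return C / (2.0 * prf_hz)


def nyquist_velocity_ms(prf_hz: float, wavelength_m: float) -> float:
    """Largest radial speed measurable without aliasing: wavelength * PRF / 4."""
    return wavelength_m * prf_hz / 4.0


WAVELENGTH_M = 0.107  # assumed S-band wavelength (~10.7 cm)

for prf in (322.0, 1000.0):  # assumed low (surveillance) and high (Doppler) PRFs
    r_km = max_unambiguous_range_m(prf) / 1000.0
    v_n = nyquist_velocity_ms(prf, WAVELENGTH_M)
    print(f"PRF {prf:6.0f} Hz -> r_max {r_km:6.1f} km, v_Nyquist {v_n:5.1f} m/s")
```

Multiplying the two gives `r_max * v_N = c * wavelength / 8`, which does not depend on PRF. This "Doppler dilemma" is why operational scans trade range for velocity coverage.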

```python
# KLOT radar location
klot_lat = 41.6044
klot_lon = -88.0847
coverage_radius_km = 460

# Create a map showing radar coverage
fig = plt.figure(figsize=(6, 5))
ax = plt.axes(
    projection=ccrs.LambertConformal(
        central_longitude=klot_lon, central_latitude=klot_lat
    )
)

# Set extent: roughly 450-500 km around the radar in each direction
ax.set_extent(
    [klot_lon - 5.5, klot_lon + 5.5, klot_lat - 4.5, klot_lat + 4.5],
    crs=ccrs.PlateCarree(),
)

# Add geographic features
ax.add_feature(cfeature.STATES, linewidth=1.5, edgecolor="black")
ax.add_feature(cfeature.COASTLINE, linewidth=1)
ax.add_feature(cfeature.LAKES, alpha=0.5, facecolor="lightblue")

# Approximate the coverage circle in lat/lon with a flat-Earth shortcut:
# ~111 km per degree of latitude, with longitude scaled by 1/cos(latitude).
# Good enough for a context map, but not a true geodesic circle.
theta = np.linspace(0, 2 * np.pi, 100)
circle_lons = klot_lon + (coverage_radius_km / 111) * np.cos(theta) / np.cos(
    np.radians(klot_lat)
)
circle_lats = klot_lat + (coverage_radius_km / 111) * np.sin(theta)
ax.fill(
    circle_lons,
    circle_lats,
    color="red",
    alpha=0.15,
    transform=ccrs.PlateCarree(),
    label="~460 km coverage",
)
ax.plot(
    circle_lons,
    circle_lats,
    color="red",
    linewidth=1.5,
    alpha=0.5,
    transform=ccrs.PlateCarree(),
)

# Mark the radar location
ax.plot(
    klot_lon,
    klot_lat,
    marker="*",
    markersize=15,
    color="red",
    transform=ccrs.PlateCarree(),
    label="KLOT Radar",
)

# Add city markers
cities = {"Chicago": (41.8781, -87.6298), "Rockford": (42.2711, -89.0940)}
for city, (lat, lon) in cities.items():
    ax.plot(
        lon, lat, marker="o", markersize=5, color="black", transform=ccrs.PlateCarree()
    )
    ax.text(lon + 0.12, lat, city, fontsize=8, transform=ccrs.PlateCarree(), ha="left")

ax.gridlines(draw_labels=True, dms=True, x_inline=False, y_inline=False, alpha=0.3)
ax.legend(loc="upper right", fontsize=9)
ax.set_title(
    "NEXRAD KLOT Coverage Area\nChicago, Illinois", fontsize=12, fontweight="bold"
)
plt.tight_layout()
plt.show()
```

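The coverage circle in the map code uses a flat-Earth shortcut (111 km per degree). For a true circle of constant distance, a minimal sketch on a spherical Earth uses the standard great-circle "destination point" formula. The helper names `geodesic_circle` and `haversine_km` are hypothetical, and the 6371 km mean radius is a spherical approximation.

```python
import numpy as np

EARTH_RADIUS_KM = 6371.0  # mean Earth radius (spherical approximation)


def geodesic_circle(lat0_deg, lon0_deg, radius_km, n_points=180):
    """(lons, lats) of points at a fixed great-circle distance from a center."""
    lat0 = np.radians(lat0_deg)
    lon0 = np.radians(lon0_deg)
    delta = radius_km / EARTH_RADIUS_KM  # angular distance in radians
    bearings = np.linspace(0.0, 2.0 * np.pi, n_points)  # closed ring
    lat = np.arcsin(
        np.sin(lat0) * np.cos(delta) + np.cos(lat0) * np.sin(delta) * np.cos(bearings)
    )
    lon = lon0 + np.arctan2(
        np.sin(bearings) * np.sin(delta) * np.cos(lat0),
        np.cos(delta) - np.sin(lat0) * np.sin(lat),
    )
    return np.degrees(lon), np.degrees(lat)


def haversine_km(lat1_deg, lon1_deg, lat2_deg, lon2_deg):
    """Great-circle distance in km between points given in degrees."""
    lat1, lon1, lat2, lon2 = map(np.radians, (lat1_deg, lon1_deg, lat2_deg, lon2_deg))
    a = (
        np.sin((lat2 - lat1) / 2) ** 2
        + np.cos(lat1) * np.cos(lat2) * np.sin((lon2 - lon1) / 2) ** 2
    )
    return 2.0 * EARTH_RADIUS_KM * np.arcsin(np.sqrt(a))


# Example: a 460 km ring around the KLOT coordinates used in the map
demo_lons, demo_lats = geodesic_circle(41.6044, -88.0847, 460.0)
```

In the map code you could pass `geodesic_circle(klot_lat, klot_lon, coverage_radius_km)` in place of the two approximate `circle_lons`/`circle_lats` lines. For an ellipsoidal Earth, Cartopy's `cartopy.geodesic.Geodesic` class also offers a `circle` method.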

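## Radar 101: What Are We Actually Measuring?

Weather radar works like a flashlight in fog:

1. **Send a pulse:** the radar emits a short burst of microwave energy.
2. **Hit particles:** raindrops, snowflakes, and bugs scatter part of that energy back.
3. **Listen for echoes:** the radar measures how much energy comes back and how long the round trip took, which tells it how far away the particles are.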
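The round-trip time is what turns an echo into a distance: the pulse travels out and back at the speed of light, so the range is `r = c * t / 2`. A minimal sketch (the function names are illustrative, not from any radar library):

```python
C = 299_792_458.0  # speed of light in m/s


def echo_range_m(round_trip_s: float) -> float:
    """Range to the scatterers from the echo's round-trip time: r = c * t / 2."""
    # Divide by 2 because the pulse travels out to the target and back.
    return C * round_trip_s / 2.0


def round_trip_time_s(range_m: float) -> float:
    """Inverse relation: time for a pulse to reach range_m and return."""
    return 2.0 * range_m / C


print(f"{echo_range_m(1e-3) / 1000:.1f} km")  # 149.9 km for a 1 ms delay
print(f"{round_trip_time_s(460e3) * 1e3:.2f} ms")  # echo delay from 460 km
```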

### Why Radar Spins and Tilts

To see the entire storm, the radar rotates 360° at multiple elevation angles:

```text
↑ 19.5° ─────────────→    (High sweep: storm tops)
↑ 10.0° ───────────────→  (Mid-level)
↑  0.5° ─────────────────→ (Low sweep: near ground)
📡 Radar
```

Each rotation at one angle is a sweep. Multiple sweeps form a volume scan (a 3D picture of the storm).
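Tilting matters because the beam rises with distance: the ground curves away beneath it, and atmospheric refraction only partly bends it back. A common estimate of beam-center height is the 4/3 effective-Earth-radius model, `h = sqrt(r**2 + (ke*a)**2 + 2*r*ke*a*sin(elev)) - ke*a`. This sketch assumes standard refraction (`ke = 4/3`) and ignores antenna height by default; `beam_height_m` is a hypothetical helper.

```python
import math

EARTH_RADIUS_M = 6_371_000.0  # mean Earth radius
KE = 4.0 / 3.0  # effective Earth radius factor for standard refraction (assumed)


def beam_height_m(range_m, elevation_deg, antenna_height_m=0.0):
    """Beam-center height above the radar for a given slant range and elevation."""
    ae = KE * EARTH_RADIUS_M
    elev = math.radians(elevation_deg)
    h = math.sqrt(range_m**2 + ae**2 + 2.0 * range_m * ae * math.sin(elev)) - ae
    return h + antenna_height_m


for elev in (0.5, 10.0, 19.5):
    print(f"{elev:4.1f} deg: {beam_height_m(100_000, elev) / 1000:5.1f} km at 100 km range")
```

At 100 km range the 0.5° beam is already roughly 1.5 km above the radar, which is one reason low-level coverage fades with distance and higher tilts are needed to sample storm tops nearby.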

> **Tip:** PPI (Plan Position Indicator) is the classic radar view: looking down from above at one elevation angle.

### What Makes Modern Radar “Polarimetric”?

Dual-polarization radar (like NEXRAD) transmits pulses in two polarizations (horizontal and vertical) and compares the two returns. Because the returns differ with particle shape and behavior, this helps distinguish rain from hail, snow, bugs, or tornado debris.
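The simplest comparison of the two polarizations is differential reflectivity, `ZDR = 10 * log10(Z_H / Z_V)`, with both reflectivities in linear units. The inputs below are hypothetical values chosen only to show the sign convention:

```python
import math


def zdr_db(z_h_linear: float, z_v_linear: float) -> float:
    """Differential reflectivity (dB) from linear horizontal/vertical reflectivity."""
    return 10.0 * math.log10(z_h_linear / z_v_linear)


# Hypothetical returns: a flattened (oblate) drop reflects more in horizontal.
print(round(zdr_db(2.0, 1.0), 2))  # 3.01
# A target that looks the same in both polarizations.
print(round(zdr_db(1.0, 1.0), 2))  # 0.0
```

Positive ZDR means the targets look wider than they are tall; values near 0 dB mean they look round on average.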

## Understanding the DataTree Structure

The Radar DataTree organizes data hierarchically:

```text
/
├── VCP-34/            ← Volume Coverage Pattern 34 ("clear air mode")
│   ├── sweep_0/       ← Lowest elevation (~0.5°)
│   │   ├── DBZH       ← Reflectivity
│   │   ├── ZDR        ← Differential reflectivity
│   │   ├── RHOHV      ← Correlation coefficient
│   │   └── PHIDP      ← Differential phase
│   ├── sweep_1/       ← Next elevation (~1.5°)
│   │   └── VRADH      ← Doppler velocity (not in sweep_0)
│   └── ...
│
├── VCP-212/           ← VCP-212 ("precipitation mode")
│   └── ...
```

### What’s a VCP?

A Volume Coverage Pattern (VCP) defines the radar's scanning strategy:

- **VCP-34:** Clear-air mode (10 sweeps in this archive; slower, high sensitivity)
- **VCP-212:** Precipitation mode (14 sweeps, faster, rain-optimized)
- **VCP-12:** Severe weather mode (14 sweeps, max time resolution)

> **Note:** The radar automatically switches VCPs based on weather conditions, so an archive can contain several VCP folders. Which ones appear depends on the weather during the archived period.

```python
# List available Volume Coverage Patterns
print("Available VCPs in this archive:")
for vcp in sorted(dtree.children):
    print(f" - {vcp}")
```

```text
Available VCPs in this archive:
 - VCP-34
```

```python
# Explore VCP-34 structure (sweep nodes plus metadata nodes)
print("\nSweeps in VCP-34:")
for sweep in sorted(dtree["VCP-34"].children):
    print(f" - {sweep}")
```

```text

Sweeps in VCP-34:
 - georeferencing_correction
 - radar_parameters
 - sweep_0
 - sweep_1
 - sweep_2
 - sweep_3
 - sweep_4
 - sweep_5
 - sweep_6
 - sweep_7
 - sweep_8
 - sweep_9
```

```python
# Look inside a single sweep: xarray's rich Dataset representation
sweep_ds = dtree["VCP-34/sweep_0"].ds
sweep_ds  # Jupyter renders this as an interactive table
```

```text
<xarray.DatasetView> Size: 17GB
Dimensions:            (vcp_time: 638, azimuth: 720, range: 1832)
Coordinates:
  * vcp_time           (vcp_time) datetime64[ns] 5kB 2025-12-08T21:04:51 ... ...
  * azimuth            (azimuth) float64 6kB 0.25 0.75 1.25 ... 359.2 359.8
    elevation          (azimuth) float64 6kB dask.array<chunksize=(720,), meta=np.ndarray>
    time               (azimuth) datetime64[ns] 6kB dask.array<chunksize=(720,), meta=np.ndarray>
  * range              (range) float32 7kB 2.125e+03 2.375e+03 ... 4.599e+05
    y                  (azimuth, range) float64 11MB dask.array<chunksize=(180, 458), meta=np.ndarray>
    x                  (azimuth, range) float64 11MB dask.array<chunksize=(180, 458), meta=np.ndarray>
    z                  (azimuth, range) float64 11MB dask.array<chunksize=(180, 458), meta=np.ndarray>
    crs_wkt            int64 8B ...
    altitude           int64 8B ...
    longitude          float64 8B ...
    latitude           float64 8B ...
Data variables:
    CCORH              (vcp_time, azimuth, range) float32 3GB dask.array<chunksize=(1, 720, 1832), meta=np.ndarray>
    PHIDP              (vcp_time, azimuth, range) float32 3GB dask.array<chunksize=(1, 720, 1832), meta=np.ndarray>
    ZDR                (vcp_time, azimuth, range) float32 3GB dask.array<chunksize=(1, 720, 1832), meta=np.ndarray>
    DBZH               (vcp_time, azimuth, range) float32 3GB dask.array<chunksize=(1, 720, 1832), meta=np.ndarray>
    RHOHV              (vcp_time, azimuth, range) float32 3GB dask.array<chunksize=(1, 720, 1832), meta=np.ndarray>
    sweep_number       (vcp_time) float64 5kB dask.array<chunksize=(1,), meta=np.ndarray>
    sweep_fixed_angle  (vcp_time) float32 3kB dask.array<chunksize=(1,), meta=np.ndarray>
```

### The Time Dimension: Your Superpower

Notice the `vcp_time` dimension? This is where cloud-native radar shines.

- **Traditional:** loop through 1,000 files, downloading and processing each one.
- **Cloud-native:** slice directly by time, with no loops:

```python
data = dtree["VCP-34/sweep_0"].sel(
    vcp_time=slice("2025-12-13 14:00", "2025-12-13 16:00")
)
```

Only the data you actually use is streamed, and only when you use it.
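Conceptually, slicing a sorted time coordinate is a binary search rather than a pass over files. The sketch below shows the principle with NumPy's `searchsorted` on synthetic timestamps; the evenly spaced 9-minute scans are an assumption for illustration, `time_slice` is a hypothetical helper, and this is not xarray's actual code path.

```python
import numpy as np

# Synthetic, evenly spaced scan times (hypothetical; real scan spacing varies)
start = np.datetime64("2025-12-13T00:00:00")
scan_times = start + np.arange(0, 24 * 60, 9).astype("timedelta64[m]")


def time_slice(times, t0, t1):
    """Indices [i0, i1) of a sorted datetime64 array falling within [t0, t1]."""
    t0 = np.datetime64(t0).astype(times.dtype)
    t1 = np.datetime64(t1).astype(times.dtype)
    i0 = np.searchsorted(times, t0, side="left")  # first time >= t0
    i1 = np.searchsorted(times, t1, side="right")  # first time > t1
    return i0, i1


i0, i1 = time_slice(scan_times, "2025-12-13T14:00", "2025-12-13T16:00")
window = scan_times[i0:i1]
print(len(window), window[0], window[-1])
```

Both bounds are inclusive, matching how label-based `slice()` selection behaves in xarray.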

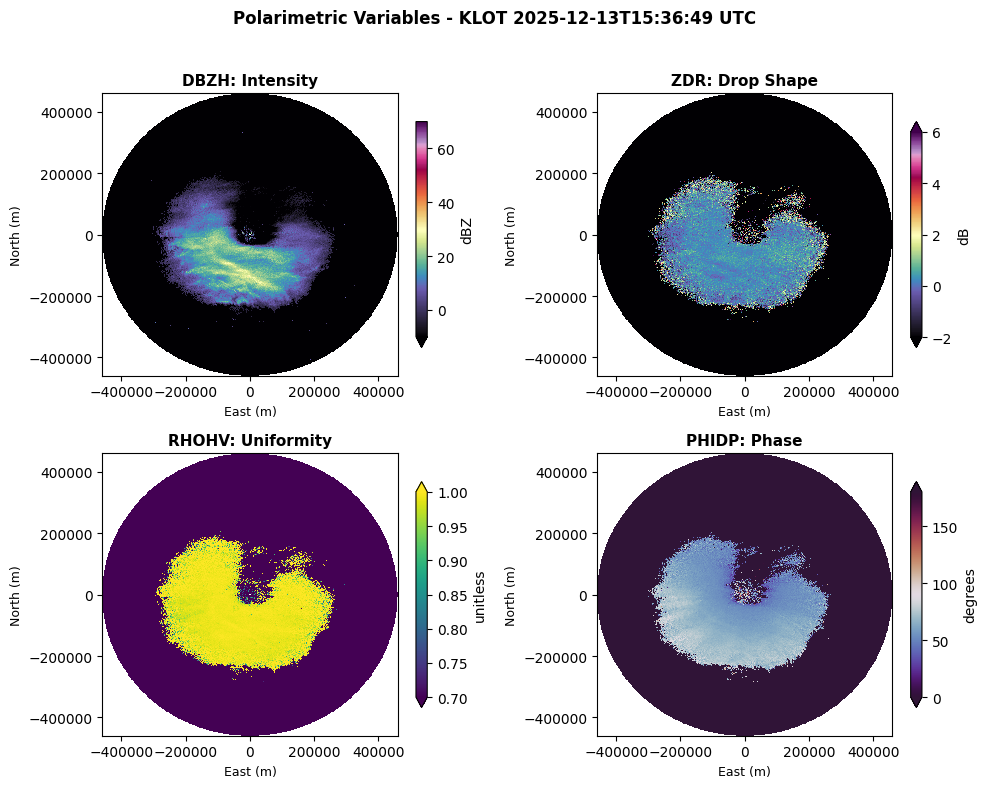

## The Four Key Variables

Dual-polarization radar measures four key variables at the lowest sweep:

| Variable | What It Reveals | Units |
|---|---|---|
| DBZH | Intensity of precipitation (heavier rain = higher values) | dBZ |
| ZDR | Particle shape (big raindrops flatten; hail tends to look round) | dB |
| RHOHV | Uniformity of the particles in the beam (0.99+ = pure rain; low = mixed types or debris) | unitless (0 to 1) |
| PHIDP | Cumulative differential phase shift; its rate of change with range (KDP) is used to estimate rainfall rate | degrees |
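Two of these rows connect directly to rainfall estimation. Reflectivity in dBZ converts to linear Z with `Z = 10**(dBZ / 10)`, and a Z-R power law `Z = a * R**b` inverts to a rain rate; the sketch assumes the classic Marshall-Palmer coefficients (a = 200, b = 1.6), which are not necessarily what later notebooks use. PHIDP itself is not a rain amount: its range derivative, `KDP = 0.5 * dPHIDP/dr`, is what rain-rate estimators use. Real PHIDP is noisy and must be filtered before differentiating, a step this sketch skips. All function names are hypothetical.

```python
import numpy as np


def dbz_to_z(dbz):
    """Convert reflectivity in dBZ to linear Z (mm^6 m^-3)."""
    return 10.0 ** (np.asarray(dbz, dtype=float) / 10.0)


def rain_rate_marshall_palmer(dbz, a=200.0, b=1.6):
    """Rain rate R (mm/h) by inverting Z = a * R**b (Marshall-Palmer by default)."""
    return (dbz_to_z(dbz) / a) ** (1.0 / b)


def kdp_from_phidp(phidp_deg, gate_spacing_km):
    """Specific differential phase (deg/km): half the range derivative of PHIDP."""
    return 0.5 * np.gradient(np.asarray(phidp_deg, dtype=float), gate_spacing_km)


print(np.round(rain_rate_marshall_palmer([20, 40, 50]), 2))

# PHIDP rising 0.5 deg per 0.25 km gate (the gate spacing in this archive) -> KDP 1 deg/km
print(kdp_from_phidp(0.5 * np.arange(10), 0.25))
```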

> **Note:** Doppler velocity (VRADH) is available in `sweep_1` and higher, showing particle motion toward or away from the radar.

> **Tip:** When the variables tell a consistent story (high DBZH, high ZDR, RHOHV near 1), you can say "heavy rain" with some confidence. When they contradict (high DBZH but low RHOHV), hail, mixed precipitation, or tornado debris become likely.
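The reasoning in the tip can be written as a couple of boolean masks. This is a toy sketch: the thresholds (45 dBZ, 1.5 dB, 0.97, 0.90) are hypothetical teaching values, and `toy_echo_class` is not an operational hydrometeor classification algorithm, which would use more inputs and fuzzy logic rather than hard cutoffs.

```python
import numpy as np


def toy_echo_class(dbzh, zdr, rhohv):
    """Rough, illustrative labels from three polarimetric variables."""
    dbzh, zdr, rhohv = (np.asarray(v, dtype=float) for v in (dbzh, zdr, rhohv))
    labels = np.full(dbzh.shape, "undetermined", dtype=object)
    strong = dbzh >= 45.0  # hypothetical "high reflectivity" threshold
    # Consistent story: strong echo, flattened drops, uniform particles
    labels[strong & (zdr >= 1.5) & (rhohv >= 0.97)] = "heavy rain"
    # Contradiction: strong echo but non-uniform particles in the beam
    labels[strong & (rhohv < 0.90)] = "hail or debris?"
    return labels


print(toy_echo_class([50, 50, 20], [2.5, 0.2, 0.5], [0.99, 0.80, 0.99]))
```

Applied to real data, the same masks work element-wise on the `DBZH`, `ZDR`, and `RHOHV` arrays of a single scan, since all three share the `(azimuth, range)` grid.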

### Let’s See All Four

We’ll visualize a single scan from a December 2025 winter storm:

```python
# Select the scan closest to 15:36 UTC on December 13, 2025
target_time = "2025-12-13 15:36"
scan = dtree["VCP-34/sweep_0"].sel(vcp_time=target_time, method="nearest")

# Check which scan we actually got
actual_time = scan.vcp_time.values
print(f"Selected scan: {actual_time}")
print(f"Elevation angle: {scan.sweep_fixed_angle.values:.2f}°")
```

```text
Selected scan: 2025-12-13T15:36:49.000000000
Elevation angle: 0.48°
```

```python
# Create a 4-panel visualization of the polarimetric variables
fig, axes = plt.subplots(2, 2, figsize=(10, 8))
axes = axes.flatten()

# Plot configuration for each variable (all four come from sweep_0)
variables = [
    {
        "var": "DBZH",
        "cmap": "ChaseSpectral",
        "vmin": -10,
        "vmax": 70,
        "label": "dBZ",
        "title": "DBZH: Intensity",
    },
    {
        "var": "ZDR",
        "cmap": "ChaseSpectral",
        "vmin": -2,
        "vmax": 6,
        "label": "dB",
        "title": "ZDR: Drop Shape",
    },
    {
        "var": "RHOHV",
        "cmap": "viridis",
        "vmin": 0.7,
        "vmax": 1.0,
        "label": "unitless",
        "title": "RHOHV: Uniformity",
    },
    {
        "var": "PHIDP",
        "cmap": "twilight_shifted",
        "vmin": 0,
        "vmax": 180,
        "label": "degrees",
        "title": "PHIDP: Phase",
    },
]

for idx, config in enumerate(variables):
    var = config["var"]
    # Plot on the Cartesian x/y coordinates (meters east/north of the radar)
    scan[var].plot(
        ax=axes[idx],
        x="x",
        y="y",
        cmap=config["cmap"],
        vmin=config["vmin"],
        vmax=config["vmax"],
        add_colorbar=True,
        cbar_kwargs={"label": config["label"], "shrink": 0.8},
    )
    axes[idx].set_title(config["title"], fontsize=11, fontweight="bold")
    axes[idx].set_xlabel("East (m)", fontsize=9)
    axes[idx].set_ylabel("North (m)", fontsize=9)

fig.suptitle(
    f"Polarimetric Variables - KLOT {str(actual_time)[:19]} UTC",
    fontsize=12,
    fontweight="bold",
    y=0.98,
)
plt.tight_layout(rect=[0, 0, 1, 0.96])
plt.show()
```

> **Tip:** Don’t interpret variables in isolation. High DBZH could be rain or hail; you need ZDR and RHOHV together to distinguish them.

## Time-Based Selection: The Killer Feature

Traditional radar analysis requires looping through files. With the DataTree, you select by time instead.

### Select a Single Scan

Use `method="nearest"` to find the scan closest to your target time:

```python
# What was happening at 15:30 UTC on December 13?
afternoon_scan = dtree["VCP-34/sweep_0"].sel(
    vcp_time="2025-12-13 15:30", method="nearest"
)
print("Requested: 2025-12-13 15:30 UTC")
print(f"Actual scan: {afternoon_scan.vcp_time.values}")
print(f"Variables: {list(afternoon_scan.data_vars)}")
```

```text
Requested: 2025-12-13 15:30 UTC
Actual scan: 2025-12-13T15:27:48.000000000
Variables: ['CCORH', 'PHIDP', 'ZDR', 'DBZH', 'RHOHV', 'sweep_number', 'sweep_fixed_angle']
```
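Nearest-neighbor selection amounts to finding the closest value in a sorted time coordinate. A minimal NumPy sketch, using the two scan times printed in this notebook (15:27:48 and 15:36:49 UTC), reproduces both lookups; `nearest_index` is a hypothetical helper, not an xarray function.

```python
import numpy as np

# Two scan times printed earlier in this notebook
times = np.array(["2025-12-13T15:27:48", "2025-12-13T15:36:49"], dtype="datetime64[s]")


def nearest_index(times, target):
    """Index of the element of a sorted datetime64 array closest to target."""
    target = np.datetime64(target).astype(times.dtype)
    i = int(np.searchsorted(times, target))
    # The nearest neighbor sits just before or at the insertion point.
    candidates = [j for j in (i - 1, i) if 0 <= j < len(times)]
    return min(candidates, key=lambda j: abs(times[j] - target))


print(times[nearest_index(times, "2025-12-13T15:30")])  # 15:27:48 is 2 min 12 s away
print(times[nearest_index(times, "2025-12-13T15:36")])  # 15:36:49 is 49 s away
```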

### Select a Time Range

Want to analyze 2 hours of data? Use `slice()`:

```python
# Get all scans from 14:00 to 16:00 UTC
two_hours = dtree["VCP-34/sweep_0"].sel(
    vcp_time=slice("2025-12-13 14:00", "2025-12-13 16:00")
)
two_hours.ds
```

```text
<xarray.DatasetView> Size: 375MB
Dimensions:            (vcp_time: 13, azimuth: 720, range: 1832)
Coordinates:
  * vcp_time           (vcp_time) datetime64[ns] 104B 2025-12-13T14:06:37 ......
  * azimuth            (azimuth) float64 6kB 0.25 0.75 1.25 ... 359.2 359.8
    elevation          (azimuth) float64 6kB dask.array<chunksize=(720,), meta=np.ndarray>
    time               (azimuth) datetime64[ns] 6kB dask.array<chunksize=(720,), meta=np.ndarray>
  * range              (range) float32 7kB 2.125e+03 2.375e+03 ... 4.599e+05
    y                  (azimuth, range) float64 11MB dask.array<chunksize=(180, 458), meta=np.ndarray>
    x                  (azimuth, range) float64 11MB dask.array<chunksize=(180, 458), meta=np.ndarray>
    z                  (azimuth, range) float64 11MB dask.array<chunksize=(180, 458), meta=np.ndarray>
    crs_wkt            int64 8B ...
    altitude           int64 8B ...
    longitude          float64 8B ...
    latitude           float64 8B ...
Data variables:
    CCORH              (vcp_time, azimuth, range) float32 69MB dask.array<chunksize=(1, 720, 1832), meta=np.ndarray>
    PHIDP              (vcp_time, azimuth, range) float32 69MB dask.array<chunksize=(1, 720, 1832), meta=np.ndarray>
    ZDR                (vcp_time, azimuth, range) float32 69MB dask.array<chunksize=(1, 720, 1832), meta=np.ndarray>
    DBZH               (vcp_time, azimuth, range) float32 69MB dask.array<chunksize=(1, 720, 1832), meta=np.ndarray>
    RHOHV              (vcp_time, azimuth, range) float32 69MB dask.array<chunksize=(1, 720, 1832), meta=np.ndarray>
    sweep_number       (vcp_time) float64 104B dask.array<chunksize=(1,), meta=np.ndarray>
    sweep_fixed_angle  (vcp_time) float32 52B dask.array<chunksize=(1,), meta=np.ndarray>
```

> **Important: Lazy Evaluation Magic**
>
> No measurement data was downloaded: only metadata and the small index coordinates were read. The actual measurements stream on demand when you call `.plot()`, `.compute()`, or perform calculations.
>
> You can set up complex analyses before committing to any data transfer.
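You can estimate what a selection would cost before loading it. Using the shapes and chunk sizes from the dataset representation (one Dask chunk = one scan of one variable), the uncompressed sizes work out as below; the bytes actually transferred depend on how the store compresses the data, and `variable_nbytes` is a hypothetical helper.

```python
import numpy as np

# Shapes from the dataset repr: (vcp_time, azimuth, range), stored as float32
N_AZIMUTH, N_RANGE = 720, 1832
BYTES_PER_VALUE = np.dtype("float32").itemsize  # 4 bytes


def variable_nbytes(n_times: int) -> int:
    """Uncompressed size of one (vcp_time, azimuth, range) float32 variable."""
    return n_times * N_AZIMUTH * N_RANGE * BYTES_PER_VALUE


one_chunk = variable_nbytes(1)  # one scan of one variable
two_hours = variable_nbytes(13)  # the 13 scans selected between 14:00 and 16:00 UTC
full_sweep = variable_nbytes(638)  # every scan of one variable in sweep_0

print(f"{one_chunk / 1e6:.1f} MB per chunk")  # 5.3 MB per chunk
print(f"{two_hours / 1e6:.1f} MB for 13 scans")  # 68.6 MB for 13 scans
print(f"{full_sweep / 1e9:.2f} GB for all 638 scans")  # 3.37 GB for all 638 scans
```

These match the `69MB` and `3GB` per-variable figures xarray reports, rounded.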

## Time Travel with Icechunk: Git for Radar Data

Icechunk isn’t just a storage layer; it’s version control for scientific data:

- Every data update creates a new snapshot (commit).
- You can view the commit history with `.ancestry()`.
- You can time travel to any previous version.
- Changes are ACID-compliant.

### Why This Matters

> **Note:** **Reproducibility:** reference an exact data snapshot in your paper. Future researchers can load exactly the same data, even if the archive has been updated since.

### View the Commit History

```python
# Show the 4 most recent commits (snapshots) on the main branch
for i, snapshot in enumerate(repo.ancestry(branch="main")):
    print(f"#{i}: {snapshot.id}")
    print(f" Date: {snapshot.written_at.strftime('%Y-%m-%d %H:%M:%S UTC')}")
    print(f" Msg: {snapshot.message[:80] if snapshot.message else 'No message'}")
    print()
    if i >= 3:  # stop after snapshots #0 to #3
        print("... (more snapshots)")
        break
```

```text
#0: TH7KSXWYZ9HHYAPQQH1G
 Date: 2025-12-17 21:00:44 UTC
 Msg: file: s3://unidata-nexrad-level2/2025/12/14/KLOT/KLOT20251214_011542_V06 added

#1: RTA051CXAJ4NG0HZ2790
 Date: 2025-12-17 20:43:03 UTC
 Msg: file: s3://unidata-nexrad-level2/2025/12/14/KLOT/KLOT20251214_010641_V06 added

#2: TAWHFJR7HZZGJAARFR50
 Date: 2025-12-17 20:35:08 UTC
 Msg: file: s3://unidata-nexrad-level2/2025/12/14/KLOT/KLOT20251214_005739_V06 added

#3: NG2BQYHBMNG4M1TWR8H0
 Date: 2025-12-17 20:30:33 UTC
 Msg: file: s3://unidata-nexrad-level2/2025/12/14/KLOT/KLOT20251214_004837_V06 added

... (more snapshots)
```

### Time Travel for Reproducibility

Each snapshot ID represents the exact state of the data at that moment:

- **Reproducible science:** reference a specific snapshot in publications.
- **Data provenance:** track how the archive evolved.
- **Debugging:** compare current data to historical versions.

> **Tip:** Include the snapshot ID in your methods section. Anyone can reproduce your analysis by loading that specific commit.
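To make the idea concrete without touching the real archive, here is a toy, in-memory model of immutable snapshots plus a movable branch pointer. `ToySnapshotRepo` is hypothetical and far simpler than Icechunk; it only illustrates why reading by snapshot ID stays stable while the branch keeps moving. With Icechunk itself, the analogous step is opening a read-only session pinned to a snapshot ID instead of a branch; check the `Repository.readonly_session` documentation for your installed version.

```python
import secrets
from types import MappingProxyType


class ToySnapshotRepo:
    """Hypothetical in-memory model: immutable snapshots plus movable branches."""

    def __init__(self):
        # snapshot id -> (parent id, read-only contents, message)
        self._snapshots = {}
        self._branches = {"main": None}

    def commit(self, branch, changes, message):
        """Create a snapshot on top of the branch tip and move the branch to it."""
        parent = self._branches[branch]
        contents = dict(self._snapshots[parent][1]) if parent is not None else {}
        contents.update(changes)
        snap_id = secrets.token_hex(10).upper()  # random stand-in for a snapshot ID
        self._snapshots[snap_id] = (parent, MappingProxyType(contents), message)
        self._branches[branch] = snap_id
        return snap_id

    def read(self, *, branch=None, snapshot_id=None):
        """Read the branch tip, or an exact historical snapshot if an ID is given."""
        if snapshot_id is None:
            snapshot_id = self._branches[branch]
        return self._snapshots[snapshot_id][1]

    def ancestry(self, branch):
        """Yield (snapshot id, message) pairs from newest to oldest."""
        snap_id = self._branches[branch]
        while snap_id is not None:
            parent, _, message = self._snapshots[snap_id]
            yield snap_id, message
            snap_id = parent


toy = ToySnapshotRepo()
pinned = toy.commit("main", {"scan_0012": "volume data"}, "file 12 added")
toy.commit("main", {"scan_0013": "volume data"}, "file 13 added")

# The branch moved on, but the pinned snapshot still shows the old state.
print(sorted(toy.read(branch="main")))  # ['scan_0012', 'scan_0013']
print(sorted(toy.read(snapshot_id=pinned)))  # ['scan_0012']
```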

## Summary: What You’ve Learned

- Connected to more than 90 GB of radar data in a few seconds
- Learned how radar works and what it measures
- Navigated a hierarchical VCP → sweep → variable structure
- Visualized four polarimetric variables
- Selected data by time (no file iteration)
- Explored version history with Icechunk

### Key Takeaways

- **Cloud-native = speed:** metadata loads in seconds, and data streams on demand.
- **Select by time:** no more looping through files.
- **Four variables together:** combined interpretation reveals precipitation type.
- **Reproducibility built in:** Icechunk snapshots preserve exact data states.

## Next Steps

### 2. QVP Workflow Comparison

- 36x speedup over traditional file-based workflows
- Reproduce a published figure from Ryzhkov et al. (2016)

### 3. QPE Snow Storm

- Compute snow accumulation during the December 2025 Illinois storm
- Use Z-R relationships for quantitative precipitation estimation

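> **Challenge Yourself**
>
> - **Find the strongest echo:** What’s the maximum DBZH on December 13?
> - **Compare VCPs:** If your snapshot contains more than one VCP, how do the sweep angles differ between VCP-34 and VCP-212?
> - **Detect rotation:** Use Doppler velocity (VRADH, in `sweep_1` and higher) to identify adjacent regions of opposing velocities.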
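For the rotation challenge, one simple starting point is the gate-to-gate velocity difference between neighboring azimuths at the same range: a tight couplet of inbound and outbound velocities shows up as a large jump. This toy sketch runs on a synthetic array; real data would first need velocity dealiasing and noise filtering, and `strongest_couplet` is a hypothetical helper.

```python
import numpy as np


def strongest_couplet(velocity):
    """(azimuth index, range index, delta-v) of the largest velocity jump
    between neighboring azimuths.

    velocity: 2-D array (azimuth, range) of radial velocity in m/s, NaN if missing.
    """
    v = np.asarray(velocity, dtype=float)
    # Difference between each ray and the next one, wrapping the last ray to the first.
    dv = np.abs(np.roll(v, -1, axis=0) - v)
    dv = np.where(np.isnan(dv), -np.inf, dv)  # ignore missing gates
    az, rg = np.unravel_index(np.argmax(dv), dv.shape)
    return int(az), int(rg), float(dv[az, rg])


# Synthetic field: calm everywhere except an inbound/outbound pair at one range gate
v = np.zeros((720, 100))
v[100, 50] = -20.0  # toward the radar
v[101, 50] = 25.0  # away from the radar
print(strongest_couplet(v))  # (100, 50, 45.0)
```

On the archive, you might feed it one scan, for example `dtree["VCP-34/sweep_1"]["VRADH"].isel(vcp_time=0).values`, assuming the velocity variable carries that name in your snapshot.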

## Open Research Questions & Community Challenges

The Radar DataTree framework makes decades of weather radar data accessible on demand, but many of the most exciting scientific questions remain wide open. Here are ambitious research directions where your contributions could make a real impact:

### 1. AI/ML for Radar Applications

Can structured, FAIR-compliant radar archives enable training deep learning models for storm classification, hail prediction, or nowcasting, without requiring custom ETL pipelines for each experiment? Cloud-native access to analysis-ready data could dramatically lower the barrier to building reproducible ML benchmarks for severe weather.

### 2. Long-Term Climate Analysis

NEXRAD has been operating since the 1990s, generating one of the longest high-resolution precipitation records on Earth. Can decades of radar data reveal trends in precipitation extremes, storm frequency, or convective behavior across the U.S.? Making this archive cloud-native opens the door to continental-scale climate studies that were previously impractical.

### 3. Ecological Applications (Aeroecology)

Weather radar doesn’t just see rain; it also captures birds, bats, and insects. Can cloud-native radar archives support continental-scale migration tracking or aeroecology studies? The same infrastructure built for meteorology could transform our understanding of animal movement across hemispheres.

### 4. Global Radar Interoperability

There are 800+ weather radars worldwide, but fewer than 20% have openly accessible data. How can we build cross-border, FAIR-aligned radar mosaics for flood forecasting, hemispheric reanalysis, or global precipitation monitoring? Standardizing on formats like Zarr v3 and DataTree could be a path toward true interoperability.

### 5. Education & Accessibility

Can cloud-native radar data lower the barrier so that students and educators can work with real 4D atmospheric observations without downloading petabytes? If a student can connect to a live radar archive in 5 seconds (as you just did above), what new classroom experiences and research projects become possible?

> **See also:** For deeper context on these challenges, see *The Untapped Promise of Weather Radar Data* (Earthmover blog).

## Citation

If you use this data or framework in your research, please cite:

Ladino-Rincón, A., & Nesbitt, S. W. (2025). Radar DataTree: A FAIR and Cloud-Native Framework for Scalable Weather Radar Archives. arXiv:2510.24943. doi:10.48550/arXiv.2510.24943

Tutorial created by the Radar DataTree team