Scientific Showcase: Reproducing Published Radar Science in 60 Seconds#

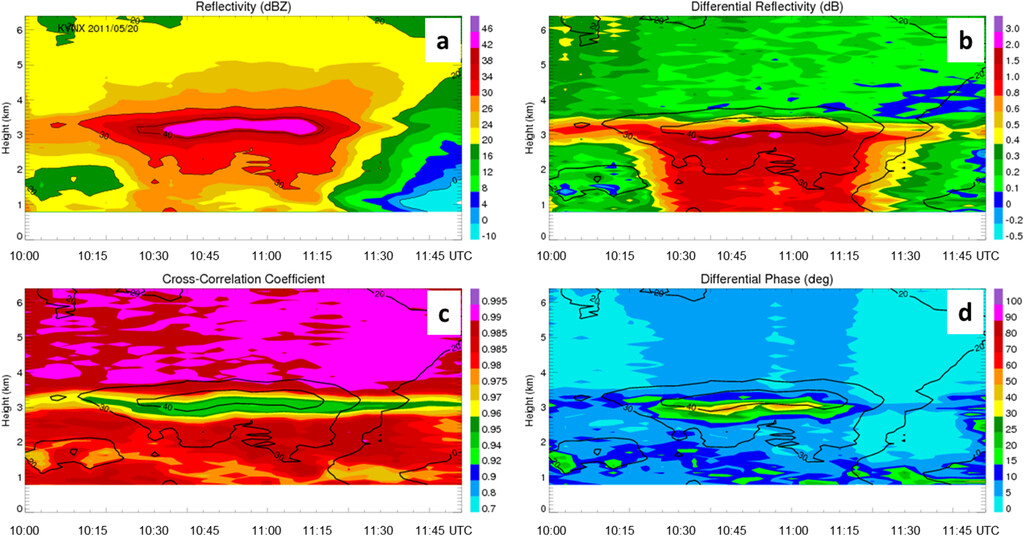

Courtesy: Ryzhkov et al. (2016)

Important

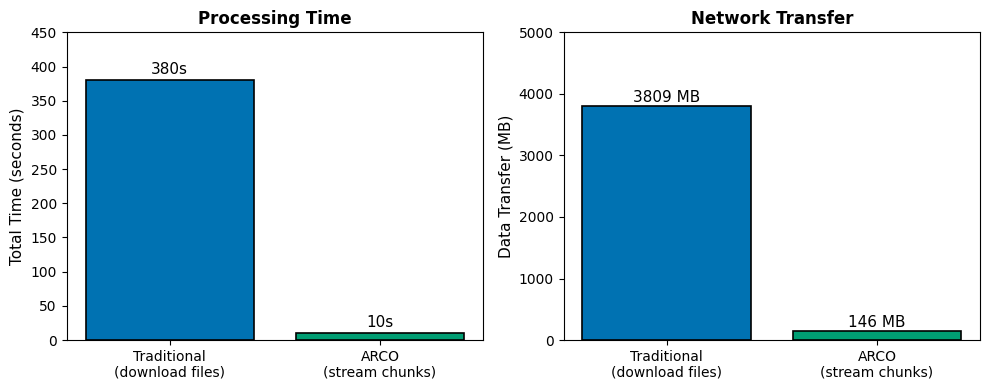

Traditionally: Reproducing this figure takes ~6 minutes (380 seconds) of processing time:

Download 809 MB of compressed NEXRAD files

Decode 55 files from binary format

Load all 17 elevation sweeps (even though we only need 1)

Concatenate and process

With Analysis-Ready Cloud-Optimized (ARCO) data: ~10 seconds

Stream only the chunks you need

No downloads, no file decoding

36x faster, 5.5x less data transfer

In this notebook, you’ll learn how the Radar DataTree framework enables this dramatic speedup—and reproduce this exact scientific figure yourself.

Note

Prerequisites

This notebook assumes you’re familiar with the basics covered in 1. Getting Started with Radar DataTree:

Connecting to cloud storage with Icechunk

Opening a DataTree and navigating the VCP/sweep hierarchy

Time-based selection with

.sel()

If you’re new to Radar DataTree, start there first.

What is a Quasi-Vertical Profile (QVP)?#

Before diving into the workflow comparison, let’s understand what we’re computing.

The Concept: A Merry-Go-Round Analogy#

Imagine standing in the center of a merry-go-round during a rainstorm. As you spin, you hold out your hand at different heights to measure how much rain hits it at each level. By averaging all the measurements from your complete rotation, you get a sense of the “typical” rainfall at that height, smoothing out any variations from specific directions.

That’s essentially what QVP does with radar data.

How It Works#

At high elevation angles (e.g., 19.5°), the radar beam rises quickly with range:

↗ Beam at 19.5° elevation (sweep_16)

/ Height increases rapidly with range

/ (~3 km height at 10 km range)

/

[RADAR]

By averaging all azimuth angles (360° rotation) at each range gate, we collapse horizontal variability and create a vertical profile:

where:

\(r\) is range (converted to height using elevation angle)

\(\theta\) is azimuth angle

\(t\) is time

\(Z\) is any radar variable (reflectivity, differential reflectivity, etc.)

Why QVPs Matter#

QVPs reveal critical atmospheric processes:

Melting layer detection: Where snow turns to rain

Precipitation structure: Ice crystal growth zones, aggregation layers

Storm evolution: Time-height displays show how precipitation processes change

The figure we’ll reproduce shows 4 hours of QVPs during a mesoscale convective system—revealing the vertical structure and temporal evolution of the storm.

Study Parameters#

To reproduce Ryzhkov et al. (2016) Figure 4, we’ll process:

Radar: KVNX (Vance Air Force Base, Oklahoma)

Event: MC3E field campaign mesoscale convective system

Date: May 20, 2011

Time Window: 08:30 - 12:30 UTC (4 hours)

Elevation: 19.5° (highest WSR-88D elevation, sweep_16)

Variables: DBZH (reflectivity), ZDR (differential reflectivity), RHOHV (correlation coefficient), PHIDP (differential phase)

Traditional Workflow: The Challenge#

The conventional approach to accessing this 4-hour window of radar data requires:

Discover 55 files in the time range from AWS S3

Download ~809 MB of compressed NEXRAD Level II files

Decode each file from binary format (loading all 17 sweeps, all variables)

Extract only the highest elevation sweep we need

Concatenate along time dimension

Compute QVPs

Benchmark Results#

(From full execution on standard laptop)

Step |

Time |

|---|---|

File discovery |

~1s |

Download + decode 55 files |

~375s |

Concatenation |

~2s |

QVP computation |

~2s |

Total |

~380s (6.3 min) |

Peak RAM usage: ~3.3 GB (loading all sweeps just to use one)

Tip

See QVP-Workflow-Benchmark.ipynb in this directory for the full traditional workflow implementation and timing details.

ARCO Workflow: Data Streaming with Radar DataTree#

The cloud-native approach eliminates the download-decode-process pipeline:

Connect: Open Icechunk repository (metadata only, <1 second)

Navigate: Browse the hierarchical VCP/sweep structure

Select: Slice by time dimension (lazy, no data transfer yet)

Stream: Data chunks fetched on-demand during computation

Important

Key advantage: No file downloads, no decoding—data streams directly from cloud storage. You only transfer the exact chunks you need.

Let’s reproduce the Ryzhkov figure and measure performance.

import sys

import warnings

from pathlib import Path

warnings.filterwarnings("ignore", category=FutureWarning)

# Ensure demo_functions is importable (needed when executed from docs/)

sys.path.insert(0, str(Path("../notebooks").resolve()))

import cmweather # noqa: F401

import icechunk as ic

import matplotlib.pyplot as plt

import xarray as xr

from demo_functions import compute_qvp, get_repo_config, ryzhkov_figure

# Study parameters

RADAR = "KVNX"

START_TIME = "2011-05-20 08:30"

END_TIME = "2011-05-20 12:30"

VARIABLES = ["DBZH", "ZDR", "RHOHV", "PHIDP"]

print(f"xarray: {xr.__version__}")

print(f"icechunk: {ic.__version__}")

xarray: 10000.dev6383+g8cf3ad708

icechunk: 2.0.0a0

Step 1: Connect to Icechunk Repository#

We’ll connect to the KVNX radar data on the Open Storage Network (OSN). This is metadata-only—no actual radar data is transferred yet.

%%time

# Configure S3-compatible storage (Open Storage Network)

storage = ic.s3_storage(

bucket="nexrad-arco",

prefix="KVNX",

endpoint_url="https://umn1.osn.mghpcc.org",

anonymous=True,

force_path_style=True,

region="us-east-1",

)

repo = ic.Repository.open(storage, config=get_repo_config())

session = repo.readonly_session("main")

print("Connected to Icechunk repository")

Connected to Icechunk repository

CPU times: user 47.2 ms, sys: 6.16 ms, total: 53.4 ms

Wall time: 381 ms

Step 2: Open DataTree#

Opening the DataTree is a lazy operation—it reads metadata about the structure but doesn’t load any actual data arrays yet.

%%time

# Open DataTree (lazy - no data loaded yet, only metadata)

dtree = xr.open_datatree(

session.store,

engine="zarr",

zarr_format=3,

consolidated=False,

chunks={},

max_concurrency=5,

)

print("DataTree opened (lazy)")

DataTree opened (lazy)

CPU times: user 2.41 s, sys: 527 ms, total: 2.94 s

Wall time: 7.33 s

Step 4: Select Time Range and Compute QVPs#

Unlike the traditional workflow, we can directly slice by time without iterating through files. Data is fetched on-demand during computation.

Tip

The .sel() operation is still lazy—no data transfer happens until we call .compute(). This allows you to build complex analysis pipelines before triggering any network operations.

# Select 4-hour window (still lazy - no data fetched)

ds_qvp_selected = ds_qvp.sel(

vcp_time=slice(START_TIME.replace(" ", "T"), END_TIME.replace(" ", "T"))

)

print(f"Selected {len(ds_qvp_selected.vcp_time)} timesteps")

print(

f"Time range: {str(ds_qvp_selected.vcp_time.min().values)[:19]} to {str(ds_qvp_selected.vcp_time.max().values)[:19]} UTC"

)

Selected 55 timesteps

Time range: 2011-05-20T08:33:14 to 2011-05-20T12:29:11 UTC

%%time

# Compute QVPs (data streams on-demand during this step)

qvp_data = {}

for var in VARIABLES:

if var in ds_qvp_selected.data_vars:

qvp_data[var] = compute_qvp(ds_qvp_selected, var=var).compute()

print(f" Computed QVP for {var}")

print(f"\nQVPs computed for {len(qvp_data)} variables")

Computed QVP for DBZH

Computed QVP for ZDR

Computed QVP for RHOHV

Computed QVP for PHIDP

QVPs computed for 4 variables

CPU times: user 1.18 s, sys: 172 ms, total: 1.35 s

Wall time: 3.62 s

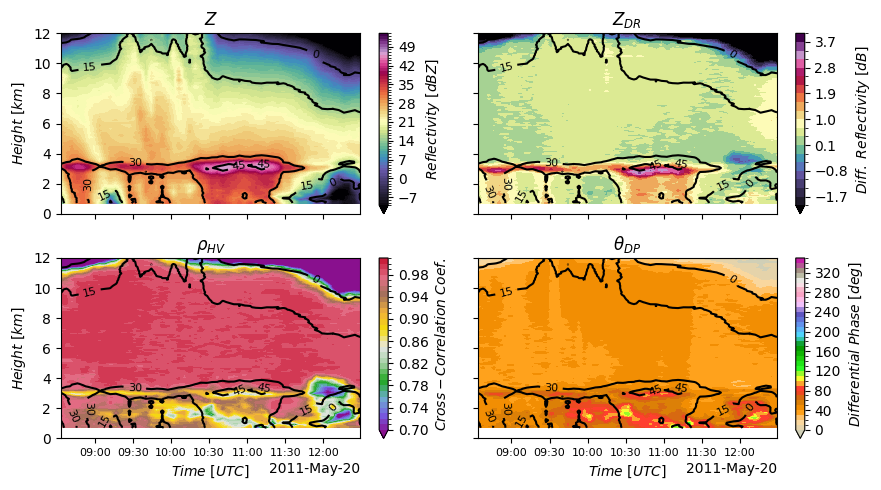

Reproducing Ryzhkov et al. (2016) Figure 4#

Now let’s visualize the QVPs as time-height cross-sections. This 4-panel figure shows the vertical structure and temporal evolution of the storm’s polarimetric signatures.

ryzhkov_figure(qvp_data["DBZH"], qvp_data["ZDR"], qvp_data["RHOHV"], qvp_data["PHIDP"])

plt.savefig("ryzhkov_qvp_reproduction.png", dpi=150, bbox_inches="tight")

plt.show()

Scientific Interpretation: Reading the QVPs#

You just reproduced a published figure. Here’s what the four panels reveal about storm structure:

Panel |

Variable |

What It Shows |

Key Feature |

|---|---|---|---|

Upper Left |

Z (Reflectivity) |

Energy scattered by precipitation |

Bright band at ~3–4 km marks the melting layer; stronger echoes aloft from ice aggregates |

Upper Right |

Z_DR (Diff. Reflectivity) |

Particle shape (horizontal vs. vertical) |

High values above melting layer from large, flat snowflakes; near-zero below from spherical raindrops |

Lower Left |

ρ_HV (Correlation Coeff.) |

Particle-type uniformity |

Dip at melting layer where mixed-phase particles (snow + melt + rain) reduce correlation |

Lower Right |

Φ_DP (Diff. Phase) |

Cumulative phase shift through precipitation |

Accumulates with range; robust against calibration errors, useful for QPE |

The Big Picture#

This storm shows classic stratiform structure: ice formation aloft (5–8 km), a well-defined melting layer (3–4 km), and rain below (0–3 km). The temporal evolution reveals this structure persisting for ~4 hours — a signature of organized mesoscale convective systems.

Performance Comparison#

Let’s quantify the speedup we achieved with the ARCO approach.

Results Summary#

Metric |

Traditional |

ARCO Streaming |

Improvement |

|---|---|---|---|

Total Time |

~380s (6.3 min) |

~10s |

36x faster |

Network Transfer |

~3.8 GB |

~146 MB |

26x less |

Peak RAM |

~3.3 GB |

~150 MB |

22x less |

Sweeps Loaded |

All 17 per file |

Only 1 (sweep_16) |

Selective |

Variables Loaded |

All ~8 per sweep |

Only 4 selected |

Selective |

Why ARCO is Faster#

Selective Access: ARCO streams only 4 variables from 1 sweep. Traditional workflow downloads all 17 sweeps and ~8 variables per file—then discards most of it.

No Decode Overhead: ARCO data is already in Zarr format (optimized for cloud access). Traditional files must be decompressed and decoded from binary format.

Native Time Indexing: ARCO supports direct time slicing with

.sel(vcp_time=...). Traditional workflow requires parsing filenames and iterating through files.Chunk-Optimized Storage: Data is stored in chunks aligned with typical access patterns (time, azimuth, range), minimizing unnecessary reads.

# Visual comparison

traditional_time = 380 # seconds (from benchmark)

arco_time = 10 # seconds (approximate from above)

traditional_mb = 3809 # MB downloaded

arco_mb = 146 # MB streamed

fig, (ax1, ax2) = plt.subplots(1, 2, figsize=(10, 4))

categories = ["Traditional\n(download files)", "ARCO\n(stream chunks)"]

colors = ["#0072B2", "#009E73"]

# Time comparison

bars1 = ax1.bar(

categories,

[traditional_time, arco_time],

color=colors,

edgecolor="black",

linewidth=1.2,

)

ax1.set_ylabel("Total Time (seconds)", fontsize=11)

ax1.set_title("Processing Time", fontsize=12, fontweight="bold")

ax1.set_ylim(0, 450)

for bar, val in zip(bars1, [traditional_time, arco_time], strict=False):

ax1.text(

bar.get_x() + bar.get_width() / 2,

bar.get_height() + 5,

f"{val}s",

ha="center",

va="bottom",

fontsize=11,

)

# Data transfer comparison

bars2 = ax2.bar(

categories,

[traditional_mb, arco_mb],

color=colors,

edgecolor="black",

linewidth=1.2,

)

ax2.set_ylabel("Data Transfer (MB)", fontsize=11)

ax2.set_title("Network Transfer", fontsize=12, fontweight="bold")

for bar, val in zip(bars2, [traditional_mb, arco_mb], strict=False):

ax2.text(

bar.get_x() + bar.get_width() / 2,

bar.get_height() + 10,

f"{val} MB",

ha="center",

va="bottom",

fontsize=11,

)

ax2.set_ylim(0, 5000)

plt.tight_layout()

plt.savefig("workflow_comparison.png", dpi=150, bbox_inches="tight")

plt.show()

print(f"\nSpeedup: {traditional_time / arco_time:.0f}x faster")

print(f"Data reduction: {traditional_mb / arco_mb:.1f}x less network transfer")

Speedup: 38x faster

Data reduction: 26.1x less network transfer

The Real Advantage: Cloud-Native Architecture#

The speedup you saw isn’t just about being “faster”—it’s about a fundamentally different architecture that unlocks new possibilities.

Why ARCO Scales Differently#

Traditional (download-decode-process) |

ARCO (stream-on-demand) |

|---|---|

Download entire files to disk |

Stream only needed chunks |

Decode all 17 sweeps, all variables |

Access single sweep, selected variables |

Linear with file count |

Sub-linear with elastic compute |

Limited by local disk I/O |

Network-parallel from cloud |

Key Benefits Beyond Speed#

Elastic Computing: Spin up 100 workers and process a year of data in parallel—impossible with file-based workflows

Network Optimization: Only transfer the exact bytes you need. The 5.5x data reduction compounds at scale.

No Storage Overhead: No need to download/store files locally. Stream directly from cloud to memory.

Reproducibility: Icechunk snapshots ensure exact data versioning—critical for scientific workflows.

Important

The paradigm shift: Traditional workflows are file-centric (process one file at a time). ARCO is analysis-centric (define your query, let the system optimize data access). This enables workflows that would be impractical with traditional approaches—like computing 30-year climatologies or training ML models on continental-scale radar archives.

Reproducibility: Science You Can Trust#

Beyond speed, the Radar DataTree framework enhances scientific reproducibility through Icechunk’s version control.

Exact Reproduction with Snapshot IDs#

Every commit to an Icechunk repository generates a unique snapshot ID. Anyone with this ID can access the exact data state you used—even if the repository has been updated since.

# Example: Open a specific snapshot

snapshot_id = "abc123..." # Snapshot ID from your analysis

session = repo.readonly_session(snapshot_id)

dtree = xr.open_datatree(session.store, ...)

This enables:

Exact figure reproduction: Future researchers can reproduce your exact results

Data provenance: Track which data versions were used in publications

Time-travel: Compare current data to previous versions

Collaborative research: Share specific data states with collaborators

FAIR Principles in Action#

The Radar DataTree framework embodies FAIR data principles:

Findable: Cloud storage with metadata-rich DataTree structure

Accessible: HTTP access via standard protocols (S3 API, Zarr format)

Interoperable: NetCDF/CF conventions, xarray integration

Reusable: Version control, clear licensing, comprehensive documentation

Tip

For publishers: Include snapshot IDs in your paper’s data availability statement. This ensures anyone can reproduce your exact analysis—strengthening peer review and enabling follow-up research.

Key Takeaways#

When to Use Each Approach#

Use Case |

Recommended |

|---|---|

Exploring a single local file |

Traditional |

Time-series analysis |

ARCO |

Multi-month processing |

ARCO |

Reproducible research |

ARCO (Icechunk versioning) |

Machine learning training |

ARCO (high-throughput) |

Real-time monitoring |

ARCO (selective access) |

Multi-year climatologies |

ARCO (scalability) |

What You Learned#

In this notebook, you:

Reproduced a published scientific figure in ~10 seconds (36x faster than traditional)

Learned how QVPs reveal precipitation microphysics through polarimetric signatures

Interpreted melting layers, aggregation zones, and storm structure from time-height displays

Explored the ARCO workflow: connect → navigate → select → stream

Understood how selective access and cloud-optimized formats enable dramatic speedups

Discovered how Icechunk versioning enables exact reproducibility

Challenge Yourself#

Try These Extensions

Compare different storms: Analyze QVPs from other MC3E cases. How do polarimetric signatures differ between convective vs. stratiform precipitation?

Multi-elevation QVPs: Compute QVPs at multiple elevation angles (e.g., sweep_8, sweep_12, sweep_16). How does beam height affect the observed signatures?

Quantitative analysis: Extract the melting layer height from the ρ_HV minimum. How does it change over time? Does it correlate with surface temperature?

Compare radars: Process the same time period from a nearby radar (e.g., KTLX). Do you see consistent features?

Long-term climatology: Compute monthly-averaged QVPs for an entire year. What seasonal patterns emerge in precipitation structure?

References#

Primary Reference:

Ryzhkov, A., et al., 2016: Quasi-Vertical Profiles—A New Way to Look at Polarimetric Radar Data. J. Atmos. Oceanic Technol., 33, 551–562, https://doi.org/10.1175/JTECH-D-15-0020.1.

Cloud-Native Data:

Abernathey, R.P., et al., 2021: Cloud-Native Repositories for Big Scientific Data. Comput. Sci. Eng., 23, 26–35, https://doi.org/10.1109/MCSE.2021.3059437.

Radar DataTree Framework:

Ladino-Rincón, A., & Nesbitt, S. W., 2025: Radar DataTree: A FAIR and Cloud-Native Framework for Scalable Weather Radar Archives. arXiv preprint, arXiv:2510.24943.